Since the YaST team rewrote the software stack for managing storage devices, we have been regularly adding and presenting new capabilities in that area. Among other features, that includes the unparalleled ability to format and partition all kinds of devices and the possibility of creating and managing Bcache devices. The time has come to present another long-awaited feature that is just landing in openSUSE Tumbleweed: support for multi-device Btrfs file systems.

As our regular readers surely know, Btrfs is a modern file system for Linux aimed at implementing advanced features that go beyond the scope and capabilities of traditional file systems. Such capabilities include subvolumes (separate internal file system roots), writable and read-only snapshots, efficient incremental backups and today's special: support for distributing a single file system over multiple block devices.

Multi-device Btrfs at a glance

Ok, you got it. YaST now supports multi-device Btrfs file systems… but what exactly does that mean? Well, it is as simple as it sounds: it's possible to create a Btrfs file system over several disks, partitions or any other block devices, pretty much like a software-defined RAID. In fact, you can use it to completely replace software RAID.

Let's see an example. Imagine you have two disks, /dev/sda and /dev/sdb, and the first disk also contains some partitions. You can create a single Btrfs file system over several of those devices at the same time, e.g., over /dev/sda2 and /dev/sdb, resulting in a configuration that looks like this:

           /dev/sda                      /dev/sdb
              |                              |
        -------------                        |
        |           |                        |
    /dev/sda1   /dev/sda2                    |
                    |                        |
                    --------------------------
                                |
                              Btrfs
                                |
                      @ (default subvolume)
                                |
                ---------------------------------
                |          |          |         |
             @/home      @/log      @/srv      ...
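To make the diagram concrete, here is a minimal Python sketch that only builds (never runs) the mkfs.btrfs command for a file system spanning /dev/sda2 and /dev/sdb. The -L (label), -d (data profile) and -m (meta-data profile) options are real mkfs.btrfs options, with the profiles explained in the next paragraph; the helper name `mkfs_btrfs_cmd` is hypothetical and the device names are simply those of the example. Keep in mind that actually running the printed command would wipe the listed devices.

```python
from typing import List, Optional, Sequence

# Profiles listed in the article for both data and meta-data.
PROFILES = {"single", "dup", "raid0", "raid1", "raid10", "raid5", "raid6"}


def mkfs_btrfs_cmd(devices: Sequence[str],
                   data: Optional[str] = None,
                   metadata: Optional[str] = None,
                   label: Optional[str] = None) -> List[str]:
    """Build (but never run) an mkfs.btrfs command spanning all devices."""
    if not devices:
        raise ValueError("at least one device is required")
    cmd = ["mkfs.btrfs"]
    if label:
        cmd += ["-L", label]
    for flag, profile in (("-d", data), ("-m", metadata)):
        if profile is None:
            continue  # leave the choice to the mkfs.btrfs defaults
        if profile not in PROFILES:
            raise ValueError(f"unknown profile: {profile}")
        cmd += [flag, profile]
    return cmd + list(devices)


# The layout from the diagram: one file system over /dev/sda2 and /dev/sdb.
print(" ".join(mkfs_btrfs_cmd(["/dev/sda2", "/dev/sdb"])))
# Same devices, with striped data and mirrored meta-data.
print(" ".join(mkfs_btrfs_cmd(["/dev/sda2", "/dev/sdb"], data="raid0", metadata="raid1")))
```

Returning an argument list instead of a shell string keeps device paths from being re-split by a shell if the list is later passed to something like `subprocess.run`.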

Once you have the file system over several devices, you can configure it to do data striping, mirroring, striping + mirroring, etc.; basically everything that RAID can do. In fact, you can configure how data and Btrfs meta-data are treated separately. For example, you could decide to stripe your data (by setting the data RAID level to raid0) and to mirror the Btrfs meta-data (by setting its level to raid1). For both data and meta-data, you can use the following levels: single, dup, raid0, raid1, raid10, raid5 and raid6.
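Each level trades capacity for redundancy. The following Python sketch estimates usable space under a loudly simplifying assumption: equally sized devices, with meta-data and allocation overhead ignored (the real Btrfs allocator works in chunks, so actual numbers differ). A second helper approximates raid1 with devices of different sizes, where each chunk needs copies on two different devices, so the largest device can only be filled as far as the others can mirror it. Function names are hypothetical.

```python
from typing import Sequence


def usable_capacity(profile: str, n_devices: int, device_size: float) -> float:
    """Rough usable space for n equally sized devices (same unit as device_size).

    Assumes enough devices for the profile; ignores meta-data and chunk overhead.
    """
    raw = n_devices * device_size
    if profile in ("single", "raid0"):
        return raw                    # no redundancy
    if profile in ("dup", "raid1", "raid10"):
        return raw / 2                # every block is stored twice
    if profile == "raid5":
        return raw - device_size      # one device's worth of parity
    if profile == "raid6":
        return raw - 2 * device_size  # two devices' worth of parity
    raise ValueError(f"unknown profile: {profile}")


def raid1_usable(sizes: Sequence[float]) -> float:
    """Approximate raid1 capacity for devices of different sizes."""
    total = sum(sizes)
    # Limited either by half the raw space or by what the smaller devices can mirror.
    return min(total / 2, total - max(sizes))


# Four 20 GiB disks.
for profile in ("single", "raid1", "raid10", "raid5", "raid6"):
    print(profile, usable_capacity(profile, 4, 20))
# A 10 GiB and a 40 GiB device mirrored: most of the big one stays unusable.
print("raid1, 10 + 40 GiB:", raid1_usable([10, 40]))
```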

The combination of this feature and Btrfs subvolumes opens an almost endless world of possibilities. It basically allows you to manage your whole storage configuration from the file system itself. Separate tools and layers like software-defined RAID or LVM are simply dispensable when using Btrfs in all its glory.

Managing multi-device Btrfs with the YaST Partitioner

Interesting feature indeed, but where to start? As usual, YaST brings you the answer! Let's see how the YaST version currently being integrated into openSUSE Tumbleweed eases the management of this cool Btrfs feature. SLE and Leap users will have to wait for the next release (15.2) to enjoy all the bells and whistles.

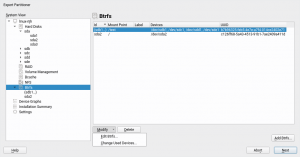

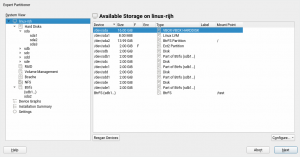

First of all, the Btrfs section of our beloved Expert Partitioner has been revamped as shown in the following picture.

It lists all Btrfs file systems, both single- and multi-device ones, which you can tell apart at first sight by the format of their names. The table contains the most relevant information about each file system, alongside buttons to add a new file system and to delete or modify existing ones.

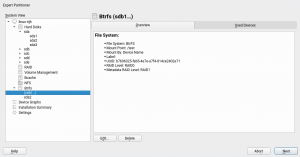

Existing Btrfs file systems can be inspected and modified in several ways. The “Overview” tab includes details like the mount point, file system label, UUID, data and meta-data RAID levels, etc. The file system can be edited to modify some aspects like the mount options or the subvolumes.

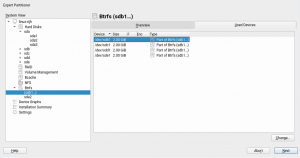

In addition, the tab called “Used Devices” contains a detailed list of the block devices used by the file system. That list can also be modified to add or remove devices. Note that this is only possible while the file system does not exist on disk yet. In theory, Btrfs allows adding and removing devices on an already created file system, but a balancing operation would be needed afterwards, and such an operation can take a considerable amount of time. For that reason, it has been left out of the Expert Partitioner.
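For reference, growing an existing file system outside YaST means adding the devices and then running that balance. The following minimal Python sketch only builds the command sequence; `btrfs device add` and `btrfs balance start` are real btrfs-progs subcommands, while the helper name and the /mnt mount point are hypothetical. Check the btrfs-progs manual pages before running anything like this on real data.

```python
from typing import List


def grow_btrfs_plan(mount_point: str, new_devices: List[str]) -> List[List[str]]:
    """Commands (not executed) to add devices to a mounted Btrfs and rebalance."""
    if not new_devices:
        raise ValueError("no devices to add")
    return [
        # Adding is quick: the new devices simply join the file system.
        ["btrfs", "device", "add", *new_devices, mount_point],
        # Existing chunks stay where they are until a balance rewrites them
        # across all devices; this is the potentially long step.
        ["btrfs", "balance", "start", mount_point],
    ]


for cmd in grow_btrfs_plan("/mnt", ["/dev/sdc", "/dev/sdd"]):
    print(" ".join(cmd))
```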

Of course, you can still format a single device as Btrfs in the traditional way (using the “edit” button for that device). But let's see how the new button for adding a Btrfs file system opens new possibilities.

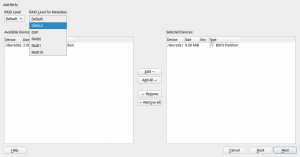

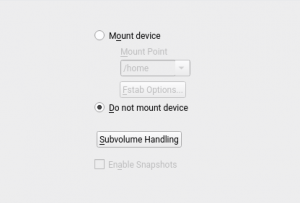

As in the RAID dialog, the available devices are listed on the left: you select the devices on which to create the file system and indicate the data and meta-data RAID levels. Of course, the admissible RAID levels depend on the number of selected devices. Clicking the “Next” button takes you to the second step of the Btrfs creation, where you can select the mount options and define the subvolumes (see the next image).
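The idea that the admissible levels depend on the number of selected devices can be sketched as a lookup of minimum device counts. The levels below are the ones YaST offers according to the comments further down (single, dup, raid0, raid1, raid10), but the thresholds are assumptions for illustration only (commonly documented minimums of two devices for raid0/raid1, four for raid10, and dup limited to a single device); they are not taken from the YaST or libstorage-ng code, and newer Btrfs versions relax some of them.

```python
from typing import Dict, List

# Hypothetical thresholds for illustration only; not taken from YaST or
# libstorage-ng, and newer Btrfs versions relax some of these limits.
MIN_DEVICES: Dict[str, int] = {"single": 1, "dup": 1, "raid0": 2, "raid1": 2, "raid10": 4}
# Assumption: dup (two copies on the same device) is only offered for one device.
MAX_DEVICES: Dict[str, int] = {"dup": 1}


def admissible_levels(n_devices: int) -> List[str]:
    """RAID levels a dialog could offer for n selected devices."""
    return [
        level
        for level, minimum in MIN_DEVICES.items()
        if minimum <= n_devices <= MAX_DEVICES.get(level, n_devices)
    ]


for n in (1, 2, 4):
    print(n, admissible_levels(n))
```

Since the dialog lets you pick the data and meta-data levels independently, both choices would go through the same check.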

Apart from all that, the Expert Partitioner has received several small improvements as a result of including multi-device Btrfs file systems. Now that multi-device Btrfs file systems are first-class citizens, they are included in the general list of devices. Note the “Type” column has also been improved to show more useful information, not only for Btrfs but for all kinds of devices.

What else works?

But YaST goes far beyond the Partitioner. We have also ensured the storage proposal (i.e. the Guided Setup) can deal with existing multi-device Btrfs configurations when you are performing a new installation. Moreover, the upgrade process is also ready to work with your multi-device Btrfs file system.

Last but not least, AutoYaST can now also be used to specify such Btrfs setups. The official AutoYaST documentation will include a specific section about advanced management of Btrfs file systems on top of several block devices; that content is being reviewed by the SUSE documentation team right now.

What does not work (yet)?

There is still one scenario that is not 100% covered. As described in bug#1137997, it is still not possible to use the “Import Mount Points” button in the Partitioner to recreate a multi-device Btrfs layout. But fear not, it's on our list of things to fix in the short term!

Get it while it’s hot

Free Software development is a collaborative process and now we need YOU to do your part. Please test this new feature and report bugs if something does not work as you expected. And please, come up with your ideas, further improvements and use cases. And, of course, don't forget to have a lot of fun!


Very Cool.

Aside from quibbling about paired/unimpaired and dispensable/indispensable in the article: if BTRFS wants to provide full RAID replacement capability, it should support RAID 01 as well.

Although I have religiously avoided software RAID in favor of hardware RAID, this sounds interesting enough to experiment with. I will be looking for full documentation covering not just setup but also management, breaking sets and recovery, and possibly guidance on using dissimilar block devices and on any limitations, particularly in the number of spindles.

Even if the article sounds a bit enthusiastic about Btrfs capabilities, it is not the intention of YaST (or the YaST Team) to endorse any particular technology. The goal of the Partitioner is to offer the same level of support for MD RAID, LVM, Bcache and Btrfs, and to make it easy to use each technology on its own or to combine them all into a single setup.

In other words, despite the goal of the Btrfs developers to include LVM and/or RAID capabilities at the file system level so you can live without those other technologies, Btrfs is (still) not a full replacement, and there may be situations in which the more mature LVM/RAID is preferable.

It depends on the use case but, as you mentioned, it is probably worth a try and some experimentation. We encourage you to have fun with it!

If you want to go beyond the Btrfs capabilities offered by YaST (which are, admittedly, a very small subset of what Btrfs can do), the official Btrfs wiki is a good place to start: https://btrfs.wiki.kernel.org

Does that mean btrfs RAID 5/6 is now finally stable, or can you still expect it to eat your data?

No, we don't consider RAID 5/6 in Btrfs to be “enterprise ready”, so to speak. If you look at the screenshot illustrating the creation of a new Btrfs file system, you will notice YaST only offers “single”, “dup”, “RAID0”, “RAID1” and “RAID10” as possible RAID levels.

RAID 5 and 6 are technically implemented in some of the tools offered by the distribution and can be used, but you will have to configure them with the native Btrfs tools instead of YaST.

Those Btrfs RAID levels are not officially supported in SUSE Linux Enterprise, which means that if you use them you are basically on your own. Of course, they are also available in openSUSE Tumbleweed and Leap, with the same level of stability (that is, use them at your own risk).

Well, I can report a first successful experiment with what I'd consider a common scenario, although it's not supported by YaST.

Objective: a new Tumbleweed installation on BTRFS RAID 10 using VMware Workstation 15.

Obstacle 1

VMware does not support installing a new machine on more than one block device, so the most direct scenario is not possible. However, that means another related scenario can be explored instead: converting a default TW install from a BTRFS root file system on a single disk to RAID10 on multiple disks after the installation.

Step 1: Install the latest TW, intending to use the YaST Partitioner as much as possible.

Then add four empty virtual disks after the installation.

Obstacle 2

I found that the YaST Partitioner cannot add block devices to the mounted root file system.

It seems YaST can create a new profile (RAID level and device group) with anything that's not root; without further investigation, I don't know whether that is because root is mounted or simply because it is root. Since I cannot expand the existing BTRFS file system using YaST, what follows can only be done with BTRFS tools.

Step 2 (or Step 1 using BTRFS tools)

Display the BTRFS file systems on the current system (note that “filesystem” can be abbreviated to “fi”):

    btrfs fi show
    Label: none uuid: 9caca3c8-8b24-4754-bc44-1d4dcdf0ec4f
        Total devices 5 FS bytes used 3.93GiB
        devid 1 size 18.62GiB used 5.02GiB path /dev/sda2
        devid 2 size 20.00GiB used 0.00B path /dev/sdb
        devid 3 size 20.00GiB used 0.00B path /dev/sdc
        devid 4 size 20.00GiB used 0.00B path /dev/sdd
        devid 5 size 20.00GiB used 0.00B path /dev/sde

Note that devid 1 already has something on it from my earlier experiments creating a btrfs file system without RAID, which is why I will have to use “-f” to force striping in the following command. I am skipping my attempt without forcing, which threw a surprising “No space left on device” error (which of course isn't true).
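To script the check that the new disks joined the file system, the devid lines of `btrfs fi show` can be parsed. A minimal Python sketch, assuming the output lists a single file system in the format shown above (the class and function names are hypothetical, and other btrfs-progs versions may format these lines differently):

```python
import re
from dataclasses import dataclass
from typing import List


@dataclass
class BtrfsDevice:
    devid: int
    size: str
    used: str
    path: str


# Matches lines such as "devid 1 size 18.62GiB used 5.02GiB path /dev/sda2".
DEVID_RE = re.compile(r"devid\s+(\d+)\s+size\s+(\S+)\s+used\s+(\S+)\s+path\s+(\S+)")
TOTAL_RE = re.compile(r"Total devices\s+(\d+)")


def parse_fi_show(output: str) -> List[BtrfsDevice]:
    """Extract one BtrfsDevice per devid line."""
    return [BtrfsDevice(int(m[1]), m[2], m[3], m[4]) for m in DEVID_RE.finditer(output)]


SAMPLE = """\
Label: none uuid: 9caca3c8-8b24-4754-bc44-1d4dcdf0ec4f
    Total devices 5 FS bytes used 3.93GiB
    devid 1 size 18.62GiB used 5.02GiB path /dev/sda2
    devid 2 size 20.00GiB used 0.00B path /dev/sdb
    devid 3 size 20.00GiB used 0.00B path /dev/sdc
    devid 4 size 20.00GiB used 0.00B path /dev/sdd
    devid 5 size 20.00GiB used 0.00B path /dev/sde
"""

devices = parse_fi_show(SAMPLE)
total = int(TOTAL_RE.search(SAMPLE)[1])
print(len(devices), "of", total, "devices listed")
# Devices with nothing allocated yet are the ones a balance would start using.
empty = [d.path for d in devices if d.used == "0.00B"]
print(empty)
```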

Step 3: Execute the conversion to RAID10:

    btrfs balance start -dconvert=raid10 -mconvert=raid10 /
    Done, had to relocate 9 out of 9 chunks

Step 4: Success! The following command verifies it:

    btrfs fi df .
    Data, RAID10: total=8.00GiB, used=3.78GiB
    System, RAID10: total=64.00MiB, used=16.00KiB
    Metadata, RAID10: total=5.00GiB, used=159.41MiB
    GlobalReserve, single: total=16.00MiB, used=16.00KiB
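The same verification can be scripted. This minimal Python sketch parses `btrfs fi df` lines in the format shown above and checks that Data, System and Metadata all report the target profile. Function names are hypothetical and the line format is assumed from this output only. A set of profiles is kept per type because, while a conversion is unfinished, the old profile can still appear as an extra line (e.g. "Data, single" next to "Data, RAID10"). GlobalReserve stays "single" in the output above, so it is not checked.

```python
import re
from typing import Dict, Set

# Matches lines such as "Data, RAID10: total=8.00GiB, used=3.78GiB".
LINE_RE = re.compile(
    r"^[ \t]*(\w+), (\w+): total=([^,\s]+), used=(\S+)[ \t]*$", re.MULTILINE
)


def profiles(df_output: str) -> Dict[str, Set[str]]:
    """Map each block group type (Data, System, ...) to the profiles seen."""
    found: Dict[str, Set[str]] = {}
    for m in LINE_RE.finditer(df_output):
        found.setdefault(m[1], set()).add(m[2])
    return found


def fully_converted(df_output: str, target: str) -> bool:
    """True if Data, System and Metadata use only the target profile."""
    found = profiles(df_output)
    return all(found.get(kind) == {target} for kind in ("Data", "System", "Metadata"))


SAMPLE = """\
Data, RAID10: total=8.00GiB, used=3.78GiB
System, RAID10: total=64.00MiB, used=16.00KiB
Metadata, RAID10: total=5.00GiB, used=159.41MiB
GlobalReserve, single: total=16.00MiB, used=16.00KiB
"""

print(fully_converted(SAMPLE, "RAID10"))
```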

Have you been able to solve the fundamental problem of btrfs “raid”?

That is, instead of providing a robust, redundant array of individual disks, it provides a “broken multi-disk setup” whenever a disk fails or gets replaced physically, which requires manual fixing.

Have you been able to automate this with auxiliary scripts?

I mean, will the filesystem continue to work (without manual intervention) when a drive fails or is removed during operation or while the machine was turned off, so that redundancy is reduced but operation is still possible?

Will btrfs start duplicating files on a single remaining disk in a strange attempt to “maintain data redundancy”?

And will the filesystem automatically re-sync a working spinning hard drive that has been removed or turned off with, say, an SSD in a laptop, after the hard drive has been plugged in or turned on again?

I would suggest exploring your scenario in a virtual machine (any virtualization including Virtualbox, VMware, KVM, etc) to be familiar with what happens and what to do.

Any questions can be posted to the Technical Help Forums (Installation)

I’ve collected and posted links to better sources of BTRFS info (IMO)

Although it is quite a bit of info from different sources to read, IMO it authoritatively covers what I feel is the most important info and the most common scenarios.

https://en.opensuse.org/User:Tsu2/systemd-1#BTRFS

From one of the links in my wiki page above:

I haven't yet found anything new, better or different for creating, modifying, adding or replacing a disk, or otherwise managing a degraded array, than what is in the BTRFS Wiki:

https://btrfs.wiki.kernel.org/index.php/Using_Btrfs_with_Multiple_Devices

Yes, I agree there is a future for YaST module(s) that can simplify BTRFS RAID management beyond initial setup.

Thank you for sharing your btrfs insights.

It's really unfortunate that btrfs “raid” seems to behave so differently from what one would expect from a RAID device.

With the udev rules and policy settings in mdraid, there is no need for “managing a degraded array”. If enabled, detaching a disk and attaching it back is a hot-plug non-issue. So maybe btrfs could also be handled with just some udev rules hooking into device events.