Archive for March, 2014

Emscripten and openSUSE: Hands on.. Hands up!

March 24th, 2014 by Tuukka Pasanen

I can code in JavaScript and I’m fairly good at it (not marvelous, just brilliant!). If you have read some of my recent blog posts, you know I think in C/C++/Perl or Bash. I also have a hobby of sorts: helping out with the UnReal World RPG game. Mostly my part is to make it work on *nix platforms (mainly Linux and Mac OS X).

As we have seen, the world is moving fast towards the web. It’s the place to be for everyone. There is a huge potential audience of players just wandering around and yelling for the pleasure of playing UrW! So I thought, let’s see if we could port SDL to JavaScript/Flash or something, straight from the same source. After a tiny amount of searching I came across Emscripten. (more…)

Proprietary AMD/ATI Catalyst fglrx rpms released

March 23rd, 2014 by Bruno Friedmann

Patience is a virtue, especially with AMD GPU drivers, but today is the FGLRX day!

Yesterday Sebastian Siebert published new versions of almost everything (except the legacy dead horse).

So, with 3 versions of the driver compiled for 6 versions of openSUSE on 2 architectures, the rpms have been published today.

Question: how many compilations does that mean? 🙂

Summary

AMD fglrx standard (HD5xxx+ radeon gpu) 13.251-4

So we got a new build of the 13.12 standard version, including support for the newer 3.14x kernel. It is available for openSUSE 11.4 to 13.1 plus Tumbleweed.

Information & bug reports: Sebastian’s blog

AMD fglrx standard BETA 14.3V1.0 (HD5xxx+ radeon gpu)

Sebastian refreshed the script to build the latest beta offered by AMD.

If you feel brave enough to try them, the rpms are located at the repository address.

Information & bug reports: Sebastian’s blog

NEW AMD fglrx unified for FirePro & FireMV gpu 13.251-1

Sebastian now also offers support for the unified driver for FirePro & FireMV GPUs.

This driver is also called fglrx, so take care not to mix the different repositories.

We created a new repository you can use.

Information & bug reports: Sebastian’s blog

Cloudy with a touch of Green

March 19th, 2014 by rjschwei

Finally there is some news regarding our public cloud presence and openSUSE 13.1. We now have openSUSE 13.1 images published in Amazon EC2, Google Compute Engine, and Windows Azure.

Well, that’s the announcement, but that alone would make for a rather short blog post. Thus, let me talk a bit about how this all works and speculate a bit about why we’ve not been all that good at getting stuff out into the public cloud.

Let me start with the speculation part, i.e. hindrances in getting openSUSE images published. In general to get anything into a public cloud one has to have an account. This implies that you hand over your credit card number to the cloud provider and they charge you for the resources you use. Resources in the public cloud are anything and everything that has something to do with data. Compute resources, i.e. the size of an instance w.r.t. memory and number of CPUs are priced differently. Sending data across the network to and from your instances incurs network charges and of course storing stuff in the cloud is not free either. Thus, while anyone can put an image into the cloud and publish it, this service costs the person money, granted not necessarily a lot, but it is a monthly recurring out of pocket expense.

Then there always appears to be the “official” apprehension, meaning: if person X publishes an openSUSE image from her/his account, what makes it “official”? Well, first we have the problem that the “official” stamp is really just an imaginary hurdle. An image that gets published by me is no more or less “official” than any other image. I am, after all, not the release manager, nor do I have any of my fingers in the openSUSE release in any way. I do have access to the SUSE accounts and can publish from there, and I guess that makes the images “official”. But please do not get any ideas about “official” images; they do not exist.

Last but not least there is a technical hurdle. Building images in OBS is not necessarily for the faint of heart. Additionally there is a bunch of other stuff that goes along with cloud images. Once you have one it still has to get into the cloud of choice, which requires tools etc.

That’s enough speculation as to why it may have taken us a bit longer than others, and just for the record, we did have openSUSE 12.1 and openSUSE 12.2 images in Amazon. With that, let’s talk about what is going on.

We have a project in OBS now, actually it has been there for a while, Cloud:Images that is intended to be used to build openSUSE cloud images. The GCE image that is public and the Amazon image that is public both came from this project. The Azure image that is currently public is one built with SUSE Studio but will at some point also stem from the Cloud:Images OBS project.

Each cloud framework has its own set of tools. The tools are separated into two categories: initialization tools and command line tools. The initialization tools reside inside the image and are generally services that interact with the cloud framework. For example, cloud-init is such an initialization tool, and it is used in OpenStack images, Amazon images, and Windows Azure images. The command line tools let you interact with the cloud framework, for example to start and stop instances. All these tools get built in the Cloud:Tools project in OBS. From there you can install the command line tools into your system and interact with the cloud framework they support. I am also trying to get all these tools into openSUSE:Factory to make things a bit easier for image building and cloud interaction come 13.2.

With this, let’s take a brief closer look at each framework, in alphabetical order; no favoritism here.

Amazon EC2

An openSUSE 13.1 image is available in all regions, the AMI (Amazon Machine Image) IDs are as follows:

sa-east-1 => ami-2101a23c

ap-northeast-1 => ami-bde999bc

ap-southeast-2 => ami-b165fc8b

ap-southeast-1 => ami-e2e7b6b0

eu-west-1 => ami-7110ec06

us-west-1 => ami-44ae9101

us-west-2 => ami-f0402ec0

us-east-1 => ami-ff0e0696

These images use cloud-init as opposed to the “suse-ami-tools” package that was used previously and is no longer available in OBS. The cloud-init package is developed on Launchpad and was started by the Canonical folks. Unfortunately, to contribute you have to sign the Canonical Contributor Agreement (CCA). If you do not want to sign it, or cannot sign it for company reasons, you can still send stuff to the package and I’ll try to get it integrated upstream. For the interaction with Amazon we have the aws-cli package. The “aws” command line client supersedes all the ec2-*-tools and is an integrated package that can interact with all Amazon services, not just EC2. It is well documented, fully open source, and hosted on GitHub. The aws-cli package replaces the previously maintained ec2-api-tools package, which I have removed from OBS.
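As a rough illustration of how the AMI list and the “aws” client fit together, the sketch below looks up the image ID for a region and assembles an `aws ec2 run-instances` command line. The AMI IDs are copied from the list above; the instance type `t1.micro` and the helper name `run_instances_command` are assumptions made for this example, and a real launch would normally also need a key pair and security group.

```python
# AMI IDs for openSUSE 13.1, copied from the list in this post.
OPENSUSE_131_AMIS = {
    "sa-east-1": "ami-2101a23c",
    "ap-northeast-1": "ami-bde999bc",
    "ap-southeast-2": "ami-b165fc8b",
    "ap-southeast-1": "ami-e2e7b6b0",
    "eu-west-1": "ami-7110ec06",
    "us-west-1": "ami-44ae9101",
    "us-west-2": "ami-f0402ec0",
    "us-east-1": "ami-ff0e0696",
}


def run_instances_command(region, instance_type="t1.micro"):
    """Build the aws-cli argument list that starts one instance in `region`.

    The instance type is an assumption for illustration only.
    Raises KeyError if the region is not in the table above.
    """
    ami = OPENSUSE_131_AMIS[region]
    return [
        "aws", "ec2", "run-instances",
        "--region", region,
        "--image-id", ami,
        "--instance-type", instance_type,
        "--count", "1",
    ]


if __name__ == "__main__":
    # Print the command instead of running it, so nothing is billed by accident.
    print(" ".join(run_instances_command("eu-west-1")))
```

Printing the command rather than executing it keeps the sketch free of side effects; pass the list to `subprocess.run` only once credentials and the remaining launch options are in place.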

Google Compute Engine

In GCE things work by name, and the openSUSE 13.1 image is named opensuse131-20140227 and is available in all regions. Google images use a number of tools for initialization: google-daemon and google-startup-scripts. All the Google specific tools are in the Cloud:Tools project. Interaction with GCE is handled with two commands, gcutil and gsutil, both provided by the google-cloud-sdk package. As the name suggests, google-cloud-sdk has the goal of unifying the various Google tools (the same basic idea as aws-cli), and Google is working on the unification. Unfortunately they have decided to do this on their own, and there is no public project for google-cloud-sdk, which makes contributing a bit difficult, to say the least. The gsutil code is hosted on GitHub, so at least contributing to gsutil is straightforward. Both utilities, gsutil for storage and gcutil for interacting with GCE, are well documented.

In GCE we also were able to stand up openSUSE mirrors. These have been integrated into our mirrorbrain infrastructure and are already being used quite heavily. The infrastructure team is taking care of the monitoring and maintenance and that deserves a big THANK YOU from my side. The nice thing about hosting the mirrors in GCE is that when you run an openSUSE instance in GCE you will not have to pay for network charges to pull your updated packages and things are really fast as the update server is located in the same data center as your instance.

Windows Azure

As mentioned previously, the current image we have in Azure is based on a build from SUSE Studio. It does not yet contain cloud-init and only has WALinuxAgent integrated. This implies that processing of user data is not possible in the image. User data processing requires cloud-init, and I just put the finishing touches on cloud-init this week. Anyway, the image in Azure works just fine, and I have no timeline for when we might replace it with an image that contains cloud-init in addition to WALinuxAgent.

Interacting with Azure is a bit more cumbersome than with the other cloud frameworks. Well, let me qualify this with, if you want packages. The Azure command line tools are implemented using nodejs and are integrated into the npm nodejs package system. Thus, you can use npm to install everything you need. The nodejs implementation provides a bit of a problem in that we hardly have a nodejs infrastructure in the project. I have started packaging the dependencies, but there is a large number and thus this will take a while. Who would ever implement….. but that’s a different topic.

That’s where we are today. There is plenty of work left to do. For example, we should unify the “generic” OpenStack image in Cloud:Images with the HP specific one (the HP cloud is based on OpenStack), and also get an openSUSE image published in the HP cloud. There’s tons of packaging left to do for nodejs modules to support the azure-cli tool. It would be great if we could have openSUSE mirrors in EC2 and Azure to avoid network charges for those using openSUSE images in those clouds. This requires discussions with Amazon and Microsoft: basically we need to be able to run those services for free, which implies that both would become sponsors of our project, just like Google has become a sponsor by letting us run the openSUSE mirrors in GCE.

So if you are interested in cloud and public cloud stuff, get involved; there is plenty of work and lots of opportunities. If you just want to use the images in the public cloud, go ahead, that’s why they are there. If you want to build on the images we have in OBS and customize them in your own project, feel free and use them as you see fit.

How to filter a certain class of hardware in dhcpd.conf

March 19th, 2014 by Bruno Friedmann

To prepare the end of the XP world

After 8th April 2014, Windows XP systems will be like children in the lions’ den if connected to the internet.

On a network I deal with, all the XP systems still running are VMware virtual machines, and none of the end users has the right to modify the settings of the virtual machine, nor administrative rights under XP.

The idea is simply to suppress the network gateway for those machines.

Playing with dhcpd.conf

There are lots of ways to handle this classification, but I discovered that you need to find the right syntax and understand how the binary-to-ascii function of dhcpd works.

First, binary-to-ascii removes any leading 0, so we will just re-add them 🙂

Extract of the dhcpd.conf

    # binary-to-ascii removes leading zeros, so rebuild the complete MAC with zero padding
    set testmac = concat (
        suffix (concat ("0", binary-to-ascii (16, 8, "", substring(hardware,1,1))),2), ":",
        suffix (concat ("0", binary-to-ascii (16, 8, "", substring(hardware,2,1))),2), ":",
        suffix (concat ("0", binary-to-ascii (16, 8, "", substring(hardware,3,1))),2), ":",
        suffix (concat ("0", binary-to-ascii (16, 8, "", substring(hardware,4,1))),2), ":",
        suffix (concat ("0", binary-to-ascii (16, 8, "", substring(hardware,5,1))),2), ":",
        suffix (concat ("0", binary-to-ascii (16, 8, "", substring(hardware,6,1))),2)
    );
    # Extract only the first 8 chars
    set testclass = substring(testmac, 0, 8);
    # You will find a lot of this on the internet, but it doesn't work (leading zeros are dropped)
    # set testmac = binary-to-ascii(16, 8, ":", substring(hardware, 1, 6));
    # All our VMware VMs use the same prefix
    if ( testclass = "00:0c:29" ) {
        # put a dummy router
        option routers 127.0.0.1;
        # useful debug log
        log (info, "xp32 lease");
    } else {
        # Default gateway for anyone else
        option routers 192.168.1.254;
        log (info, "standard lease");
    }
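To see why the padding trick in the extract is needed, here is a small Python sketch that imitates the behaviour described above. The function names and the sample MAC address are made up for illustration, and the first function only approximates what binary-to-ascii does with base 16 and width 8; it is not dhcpd code.

```python
def binary_to_ascii_hex(octets, separator=""):
    """Imitate binary-to-ascii(16, 8, separator, data): hex digits, no leading zeros."""
    return separator.join(format(octet, "x") for octet in octets)


def padded_mac(mac_bytes):
    """Rebuild a zero-padded MAC the same way the config does.

    For each byte: prepend "0" to its hex form, then keep the last two
    characters (the equivalent of suffix(concat("0", ...), 2)).
    """
    return ":".join(("0" + binary_to_ascii_hex([octet]))[-2:] for octet in mac_bytes)


# Hypothetical VMware-style MAC address used only for this example.
mac = bytes([0x00, 0x0C, 0x29, 0x0A, 0xBC, 0x01])

naive = binary_to_ascii_hex(mac, ":")  # leading zeros are lost
padded = padded_mac(mac)               # every byte is two hex digits
prefix = padded[:8]                    # same as substring(testmac, 0, 8)

print(naive)
print(padded)
print(prefix == "00:0c:29")
```

With the naive form, the first eight characters no longer line up with the vendor prefix, which is why the comparison against "00:0c:29" only works on the padded string.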

That’s all for today

osc build with kvm on an encrypted volume group

March 15th, 2014 by Bruno Friedmann

How to build an initrd-virtio on a fully encrypted volume group

If, like me, you care about the data stored on your laptop, you certainly use a fully encrypted configuration (except /boot) based on LVM.

In my case I also like to create, build, and fix packages locally with our tool osc. I have plenty of power and a beefy SSD, so I dedicate an LVM logical volume to building packages cleanly with a qemu-kvm configuration, like OBS does.

Prepare the kvm building system

As root, create 2 LVM volumes with lvcreate: one will be the build root, the other the additional swap.

In ~/.oscrc I enable the following parameters

    build-type = kvm
    build-device = /dev/mapper/vg0-lvobsbuild
    build-swap = /dev/mapper/vg1-lvobsswap
    build-memory = 4096
    build-vmdisk-rootsize = 16000
    build-vmdisk-swapsize = 4000
    build-vmdisk-filesystem = ext4

You just have to adjust the memory size and the devices to match what you created for your own environment.
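The relation between the two logical volumes and the settings above can be sketched as follows. This is only an illustration: it assumes the vmdisk sizes are given in megabytes, that each volume is created at exactly that size, and that the volume group and volume names contain no hyphens. The helper names are hypothetical; only `lvcreate -L <size> -n <name> <vg>` is standard LVM usage.

```python
OSCRC_BUILD_SETTINGS = """\
build-type = kvm
build-device = /dev/mapper/vg0-lvobsbuild
build-swap = /dev/mapper/vg1-lvobsswap
build-memory = 4096
build-vmdisk-rootsize = 16000
build-vmdisk-swapsize = 4000
build-vmdisk-filesystem = ext4
"""


def parse_settings(text):
    """Parse 'key = value' lines into a dictionary."""
    settings = {}
    for line in text.splitlines():
        if "=" in line:
            key, value = line.split("=", 1)
            settings[key.strip()] = value.strip()
    return settings


def split_mapper_path(path):
    """Split /dev/mapper/<vg>-<lv> into (vg, lv).

    Assumes neither name contains a hyphen (device-mapper doubles those).
    """
    vg, lv = path.rsplit("/", 1)[1].split("-", 1)
    return vg, lv


def lvcreate_commands(settings):
    """Return one lvcreate argument list per build volume (sizes assumed to be MB)."""
    commands = []
    for device_key, size_key in (
        ("build-device", "build-vmdisk-rootsize"),
        ("build-swap", "build-vmdisk-swapsize"),
    ):
        vg, lv = split_mapper_path(settings[device_key])
        commands.append(["lvcreate", "-L", settings[size_key] + "M", "-n", lv, vg])
    return commands


if __name__ == "__main__":
    # Only print the commands; run them yourself as root after checking the sizes.
    for command in lvcreate_commands(parse_settings(OSCRC_BUILD_SETTINGS)):
        print(" ".join(command))
```

In practice you may want the volumes somewhat larger than the vmdisk sizes; the one-to-one mapping here just makes the link between the two steps visible.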

Help yourselves to our low hanging fruit!

March 13th, 2014 by calumma

An update on what we’re doing and a call for help!

What was going on

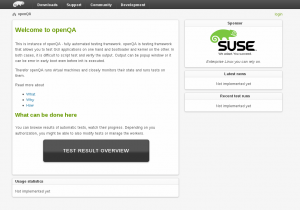

From our previous posts you probably know what we are doing these days. We are working on our goal of making Factory more stable by using staging projects and openQA. Both projects are close to reaching important milestones. Regarding the osc plugin that helps out with staging, we are at the point where coolo and scarabeus can manage Factory and staging projects using it. We did some work on covering it with tests, and currently we are about halfway there. Yes, there is room for improvement (patches are welcome), but it is good enough for now. We are missing integration with OBS, automation and much more. But as the most important part of all this is integration with openQA, we decided to put this sprint on hold and focus even more on openQA.

How to play with it and help move this forward?

During our work, we found some tasks that are not blocking us but would be nice to have. Some of these are quite easy for people interested in diving in and making a difference in these projects. We put them aside in progress.opensuse.org to make it easily recognizable that these tasks can be taken and are relatively easy. Let’s take a closer look at what tasks are there to play with. Of course, it would be very cool if, through using the new tools, you found other things that improve the workflow!

Helping staging

I already mentioned one way you can help our factory staging plugin.

- Improving test coverage. It might sound boring at first, but it is quite an entertaining task. We have short documentation explaining how the tests work. In summary, we have a class simulating a limited subset of OBS, and we just run commands and check whether our fake OBS ends up in the correct state. This makes writing tests much easier. To make it more interesting, we use Travis CI and Coveralls. A big advantage of those is that they do a test run on every pull request and show you how you improved the test coverage. They also show what is not covered yet. In other words, they show what should be covered next 🙂

- What else needs to be done to make staging projects more reliable is to fix all packages that are randomly failing. This is mostly a packaging/OBS debugging task, so it doesn’t require any Python and can be done package by package.

- The last thing on the staging projects todo list for now is turning the staging API class into a singleton. For those who know Python this is actually really easy. But while at it, you will be taking a look at the rest of our code, and we will definitely not mind anybody polishing it or removing bugs!

Helping openQA

In openQA, we currently have code cleaning tasks. They are about polishing the code we have, like removing the useless index controller and fixing code highlighting, or doing simple optimizations, like caching image thumbnails.

In the case of openQA, you might be a little confused about where to send your code. We have been doing some short and mid term planning with Bernhard M. Wiedemann, the man behind openqa.opensuse.org and one of the original openQA developers, together with Dominik Heidler. The code considered to be ‘upstream’ has now been moved to several repositories under the os-autoinst organization on GitHub. This code contains all the improvements introduced to extensively test our beloved openSUSE 13.1. The next generation of the tool is being developed right now in a separate repository and will be merged into upstream as soon as we feel confident enough, which means more automated testing, more staging deployments and more code revisions and audits. If you want to help us, you are welcome to send pull requests to either of those repositories and someone will take a look at them.

What next?

As we wrote, we are currently putting all our effort into openQA. We already have basic authentication and authorization; we are working on tests, fixing bugs and making sure the service in general is easy to deploy. And of course we are working toward our main goal: having a new openQA instance with all the cool features available in public. It is already fairly easy to get your own instance running, so if you are a web or Perl developer, nothing should stop you from playing with it and making it more awesome!

Is my server alive and how good is my connection

March 10th, 2014 by Tuukka Pasanen

If you have time to set up a real solution and need something reliable, try Zabbix (http://zabbix.com). Zabbix is a wonderful all-in-one solution for monitoring your network and your hosts (servers). If you need to know how good your Internet/(W)LAN connection is, then things get complicated. (more…)

Short report from Installfest 2014 Prague

March 6th, 2014 by calumma

This week, Michal and Tomáš write about their visit to Installfest 2014 in Prague.

Installfest Prague is a local conference that, despite its name, has talk tracks and sometimes even quite technical topics. We, Michal Hrušecký and Tomáš Chvátal, attended this event and spread the Geeko News around…

Linux for beginners workshop

Tomáš worked with local community member Amy Winston to show that our lovely openSUSE 13.1 is great for day-to-day usage, even (or especially) for people migrating from Windows. We demonstrated KDE’s Plasma Desktop and how to use YaST to achieve common tasks, and then asked the audience for specific requests about things to demonstrate. We can tell you the participants liked Steam a lot! Apart from talking about and demonstrating openSUSE, we also explained to people that SUSE sells SLE and that they can buy it in the store if they want a rock-solid stable OS.

The workshop setup was a bit tricky because the machines didn’t have optical drives and we didn’t have enough USB sticks to work around the issue. Our solution was to boot the ISO directly from a PXE server and force NetworkManager not to start during boot, because it happily overwrote the network configuration and the machine thus lost the ISO it was booting from.

Factory workflow presentation

Over the last months, our team has been working on improving the way we develop openSUSE: the Factory Workflow. You can read up on it in earlier blogs here. During the Installfest, we showcased our new workflow, which uses openQA and staging projects. We discussed what we are trying to achieve and what we can already do right now with the osc/obs/openQA combo. People were quite enthusiastic, and two people in the audience already used Factory!

As always we mentioned that we have Easy hacks and we really really want people to work on them.

Overall SUSE presence

The openSUSE/SUSE presence was, as usual, high at this event, so we tried our best to let people know that we are cool as a project and great as a company. We shared our openSUSE/SLE DVDs, posters, stickers, … with everyone. During the talks/workshops we also gave out SUSE swag as presents to people who answered some tricky questions.

Job offerings

We let everyone know that we are looking for plenty of new people in various departments, namely QA/QAM, in which a few people were interested and took the printed prospectuses. So hopefully our teams will grow 🙂

getting my DVB-T card to work

March 6th, 2014 by bmwiedemann

Today I tried to get a DVB-T card to work with a new antenna on a new 13.1 install.

I know it was working, because I ran it with 12.3 on this machine last year.

hwinfo --tv

showed

Model: “Hauppauge computer works WinTV HVR-1110”

Vendor: pci 0x1131 “Philips Semiconductors”

Device: pci 0x7133 “SAA7131/SAA7133/SAA7135 Video Broadcast Decoder”

So after plugging everything in, I started kaffeine, which still knew about all local channels, but could not tune.

http://www.linuxtv.org/wiki/index.php/Hauppauge_WinTV-HVR-1110 gave the important hint that one needs a firmware file. Once that was in /lib/firmware/dvb-fe-tda10046.fw, and after a reboot, came the next try. kaffeine would now show 99% SNR, so a good signal, and even knew what was currently on air; however, the picture remained black.

kaffeine hinted that it needs extra software, but could not find it, even though packman repos were available (annoying bug).

http://opensuse-community.org/ finally helped – I needed the libxine2-codecs package from the packman repo.

Now everything is working after less than an hour.